
QLab MIDI remote (5/20/2023)

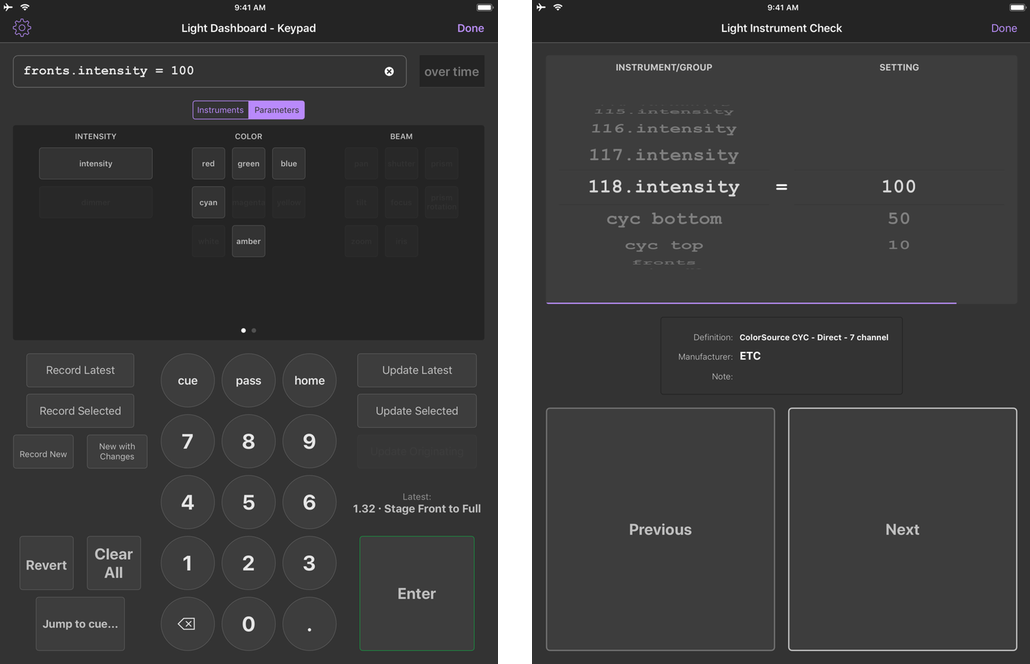

You can have many clients connected to the server simultaneously, and it’s possible to run the server headless. Here’s the Open Stage Control panel running in its own GUI:Īnd here it is on a Chrome browser on an iPad. Each of 8 notes of the C major Scale C-C are on separate tracks. The workspace contains a main cue list with 3 cues in a fire all groupĮach cue is an 8 track WAV file of an instrument with repeated notes. Here is the QLab workspace we are going to control. Here’s it is in action (Best Viewed full screen):

It also displays the number and name of the current cue. This project uses Open-Stage-Control to synchronise a fader bank remote running on any of the devices listed above with the sliders in the selected cue in QLab. This project is going to use an almost identical workspace to that used in this QLab Cook Book chapter In fact, this project relies on a feature that did not exist, that Jean-Emmanuel incorporated by coding a custom module in JavaScript within a day of requesting it. The Developer, Jean-Emmanuel is very responsive. It’s software that is very much in development, it seems very stable, but you would need to test it thoroughly for your application. Currently, this includes Windows 7 or later, MacOS 10.10 or later, Linux v2.24 or later, iOS10 or later, Android Jelly Bean or later. What this means is that once you have it up and running, you can log in to the server from a Google Chrome web browser on any device that supports it. It’s built on web technologies and run as an Electron web server that accepts any number of clients. Open Stage Control is a desktop OSC bi-directional control surface application. In addition to QLab, it uses the following software which can be downloaded without charge, using the links below. It opens up the possibilities of creating control panels on cheap tablets costing less than 50 dollars. However you can't do this on an audio track.This chapter looks at remote control of QLab, using different operating systems on a range of devices. This brings up another question though - how should I go about automating the devices on an audio track? I know with MIDI tracks you can inset a clip (i'm using clip view by the way) onto the MIDI track and then draw in device on/off automation. If you are using multiple controllers then you might need to put some thought into MIDI channels to avoid unpleasant clashes.Īh this does indeed work nicely. 
fire the MIDI cue in QLab, Live should then capture the value of the incoming MIDI message (it should appear in the MIDI mapping browser). in QLab, choose a MIDI note or CC in a MIDI cue

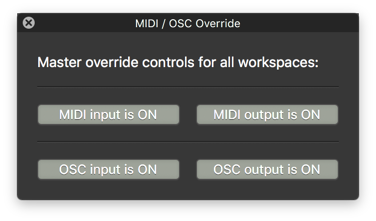

put Live in MIDI mapping mode, then click on the control that you would like to map to You get Live to capture the incoming MIDI message while in MIDI mapping mode, just like when mapping controls from a hardware device. I'll give that a shot - how to I go about mapping though? If Ableton is receiving MIDI info from another program, I can exactly just select the note and MIDI map it - would I need to somehow capture the incoming note? you can now map MIDI notes or CCs coming out of QLab (via the IAC bus) to the device on/off switches of your devices, etc. In the Live MIDI prefs, turn on 'Remote' for input from the IAC bus. In QLab, you can now send MIDI triggers to the IAC bus. Open up Audio MIDI Setup and enable the IAC bus. You don't need to mess with hardware MIDI routing, as OS X has a built-in software MIDI system which you can use to route MIDI between applications-the IAC bus. During a specific part of a song (the bridge), I'd like to apply effects/EQ & so on to my vocal mic track within Ableton - since i'm not using clips to play the backing tracks (those are within QLab), is there a way to essentially send information from QLab (with either a MIDI/timecode track etc), and use that to tell Ableton when to turn on/off FX that I have on my vocal mic audio track?
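A QLab MIDI cue sending a note or CC over the IAC bus produces an ordinary MIDI channel message. The channel is carried in the low four bits of the status byte. This is why two controllers sending the same note or CC number on the same channel can clash in Live's mapping. The Python sketch below only builds those raw bytes so the channel encoding is visible. It does not talk to the IAC bus, and `note_on` and `control_change` are hypothetical helper names, not part of QLab or Live.

```python
def _status_byte(kind: int, channel: int) -> int:
    """Combine a message type (high nibble) with a channel given as 1-16."""
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channel must be in the range 1-16")
    # Apps display channels as 1-16; on the wire they are 0-15 in the low nibble.
    return kind | (channel - 1)


def _data_byte(value: int) -> int:
    """MIDI data bytes are 7-bit, so they must be in the range 0-127."""
    if not 0 <= value <= 127:
        raise ValueError("MIDI data bytes must be in the range 0-127")
    return value


def note_on(channel: int, note: int, velocity: int) -> bytes:
    """Build a 3-byte Note On message (status 0x90 plus channel)."""
    return bytes([_status_byte(0x90, channel), _data_byte(note), _data_byte(velocity)])


def control_change(channel: int, controller: int, value: int) -> bytes:
    """Build a 3-byte Control Change message (status 0xB0 plus channel)."""
    return bytes([_status_byte(0xB0, channel), _data_byte(controller), _data_byte(value)])
```

For example, `control_change(1, 20, 127)` gives `b'\xb0\x14\x7f'`, while the same controller number on channel 2 gives `b'\xb1\x14\x7f'`. Putting each controller on its own channel keeps their messages distinct.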
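In the Open Stage Control project, Open Stage Control handles all the OSC traffic between the fader bank and QLab, so no code is required. To show roughly what a single fader move looks like on the wire, the Python sketch below hand-encodes an OSC message and sends it over UDP. The message consists of a NUL-padded address string and type-tag string, followed by big-endian 32-bit floats.

The sketch relies on two assumptions you should check:
* QLab is listening for OSC on UDP port 53000.
* QLab accepts `/cue/selected/sliderLevel/{n} {dB}`, as in the QLab 4 OSC dictionary. Other QLab versions may use different methods.

`osc_message` and `send_slider_level` are hypothetical helper names.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII bytes, NUL-terminated, padded to a multiple of 4."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * ((4 - len(data) % 4) % 4)


def osc_message(address: str, *floats: float) -> bytes:
    """Build an OSC message whose arguments are all 32-bit floats ('f' type tags)."""
    type_tags = "," + "f" * len(floats)
    # OSC numbers are big-endian; ">f" packs an IEEE 754 single-precision float.
    args = b"".join(struct.pack(">f", value) for value in floats)
    return _osc_string(address) + _osc_string(type_tags) + args


def send_slider_level(slider: int, level_db: float,
                      host: str = "127.0.0.1", port: int = 53000) -> None:
    """Send one fader move to QLab as a single UDP datagram.

    ASSUMPTIONS: QLab listens for OSC on UDP port 53000 and understands
    /cue/selected/sliderLevel/{slider} {dB} (QLab 4 OSC dictionary).
    Check both against the QLab version you are running.
    """
    message = osc_message(f"/cue/selected/sliderLevel/{slider}", level_db)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(message, (host, port))
```

Under those assumptions, with QLab running on the same machine, `send_slider_level(1, -6.0)` would ask QLab to set slider 1 of the selected cue to -6 dB.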
